Video dubbing requires content accuracy, expressive prosody, high-quality acoustics, and precise lip synchronization, yet existing approaches struggle on all four fronts. To address these issues, we propose DiFlowDubber, the first video dubbing framework built upon a discrete flow matching backbone with a novel two-stage training strategy. In the first stage, a zero-shot text-to-speech (TTS) system is pre-trained on large-scale corpora, where a deterministic architecture captures linguistic structures and the Discrete Flow-based Prosody-Acoustic (DFPA) module models expressive prosody and realistic acoustic characteristics. In the second stage, we propose Content-Consistent Temporal Adaptation (CCTA) to transfer TTS knowledge to the dubbing domain: its Synchronizer enforces cross-modal alignment for lip-synchronized speech. Complementarily, the Face-to-Prosody Mapper (FaPro) conditions prosody on facial expressions. The outputs of FaPro are then fused with those of the Synchronizer to construct rich, fine-grained multimodal embeddings that capture prosody-content correlations, guiding the DFPA to generate expressive prosody and acoustic tokens for content-consistent speech. Experiments on two benchmark datasets demonstrate that DiFlowDubber outperforms prior methods across multiple evaluation metrics.

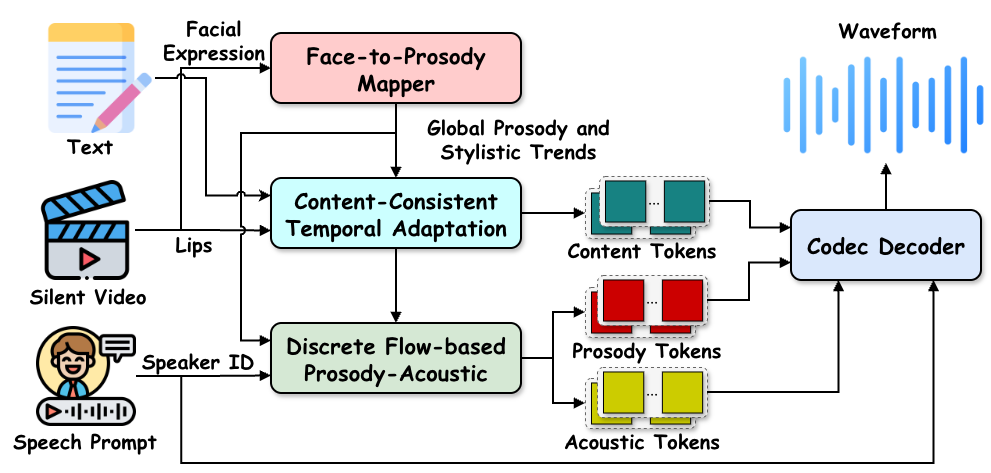

Figure 1: Overall inference pipeline of DiFlowDubber. The Face-to-Prosody Mapper module predicts prosody priors that capture global prosody and stylistic cues from facial expressions. The Content-Consistent Temporal Adaptation module generates discrete content tokens conditioned on lip movements, text, and prosody priors, ensuring consistency with the target text transcription and temporal alignment with the video. The Discrete Flow-based Prosody-Acoustic module generates diverse yet globally consistent prosody tokens under the guidance of the prosody prior, together with the corresponding acoustic tokens. The speech waveform is synthesized from the predicted tokens and the speaker embedding via a Codec Decoder.

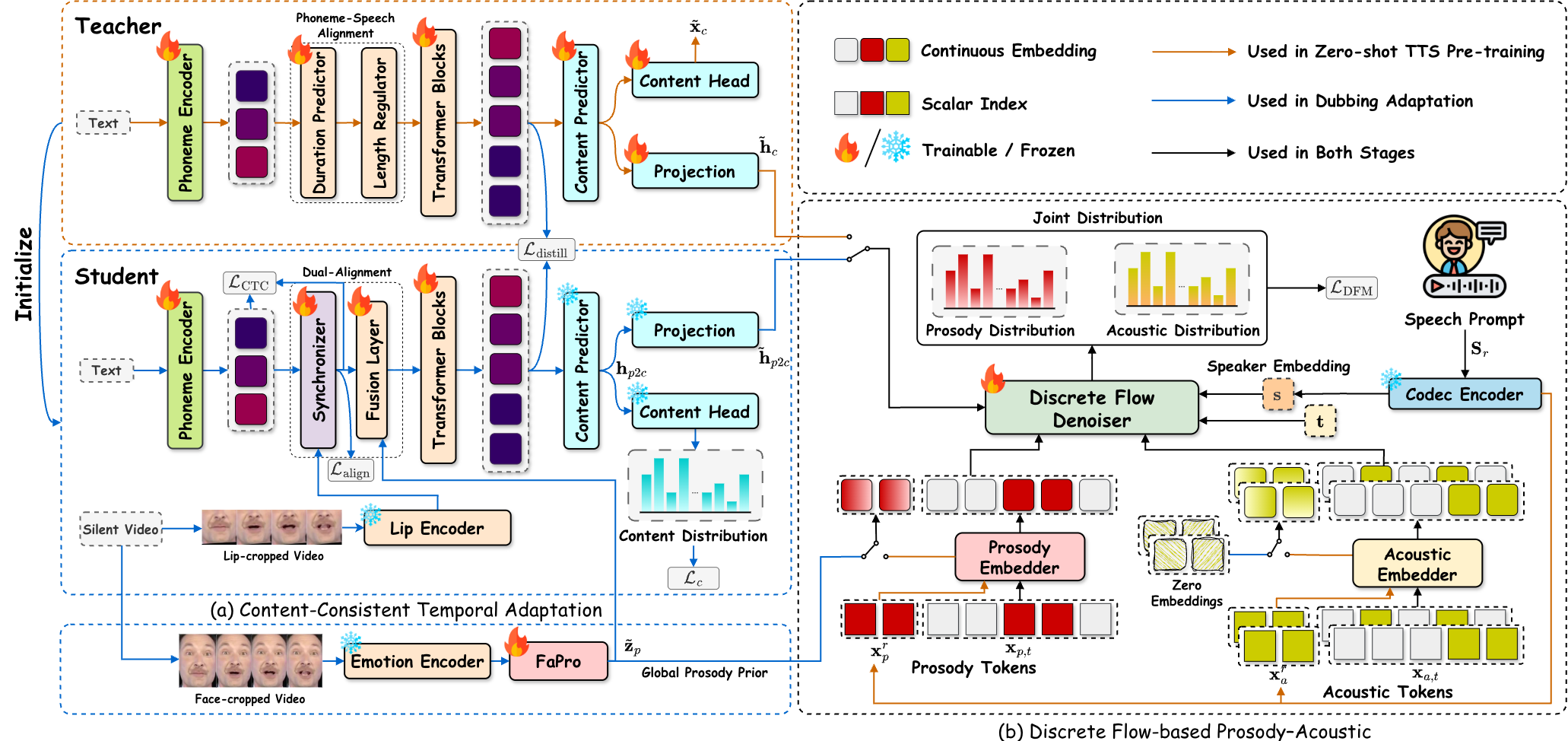

Figure 2: Detailed DiFlowDubber pipeline. Our framework is a two-stage pipeline. The first stage performs zero-shot TTS pre-training, where a simple deterministic content modeling architecture efficiently captures linguistic structures (orange dashed box). For prosody and acoustic attributes, we adopt the (b) Discrete Flow-based Prosody-Acoustic (DFPA) module to model expressive prosodic variations and realistic acoustic diversity from the corpus. In the second stage, the model is adapted to the video dubbing task, where the (a) Content-Consistent Temporal Adaptation module transfers consistent content knowledge from the TTS domain and generates temporally aligned content representations. Meanwhile, FaPro extracts a global prosody prior from facial expression cues. The DFPA module then models the joint distribution of prosody and acoustic tokens conditioned on the global prosody prior, latent content representations, and speaker embedding.

Sample 1: So already we have a prediction from our quantum mechanical understanding of bonding.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

Sample 2: In fact if we write the equilibrium expression for this we'll find the equilibrium constant is less than one.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

Sample 3: And we expect that we'll have an increase in that vapor intensity.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

Sample 1: Lay blue with d seven now.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

Sample 2: Lay blue by t eight now.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

Sample 3: Set white by i six please.

| Ground-Truth | HPMDubbing | StyleDubber | Speaker2Dubber | ProDubber | EmoDubber | DiFlowDubber |
|---|---|---|---|---|---|---|

We present qualitative comparisons between DiFlowDubber and baseline methods. The highlighted regions (in red boxes) indicate areas where different models show noticeable discrepancies, with our results remaining most consistent with the ground truth. This demonstrates that our approach effectively preserves pitch continuity and prosodic dynamics while maintaining alignment with lip movements in the corresponding video frames.

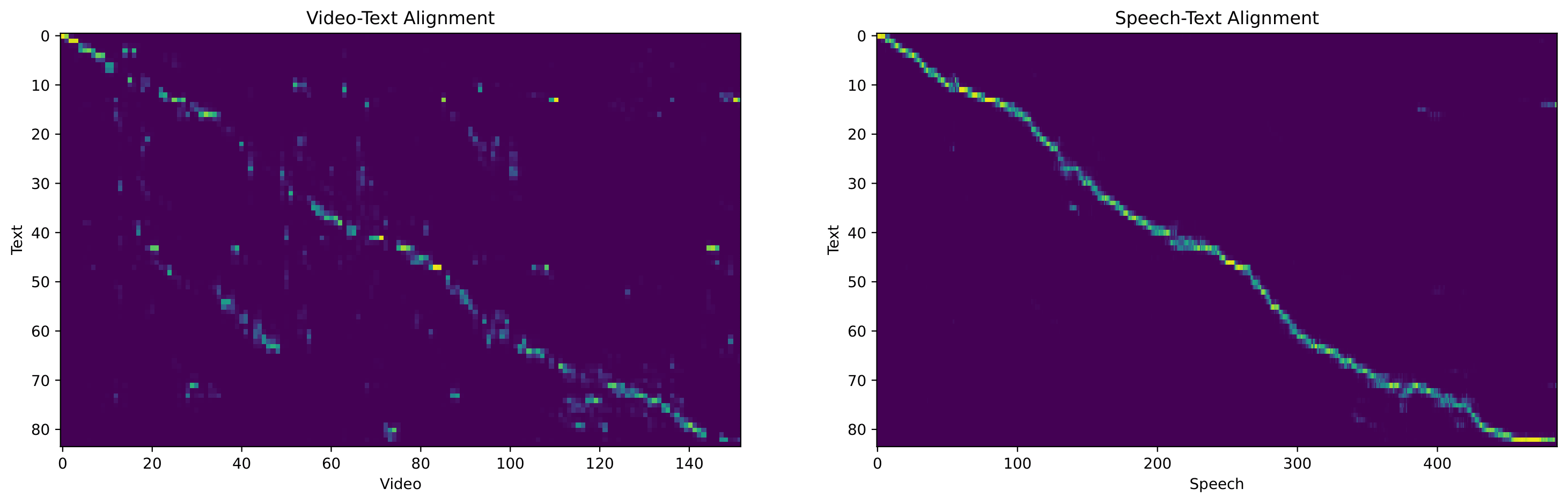

Visualization of the attention maps learned by the Synchronizer module. The left panel shows the video-text alignment between lip-frame features and phoneme embeddings, while the right panel shows the speech-text alignment between discrete speech tokens and phonemes.
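To make the notion of a video-text attention map concrete, the following is a minimal sketch of how such a map could be computed. It assumes standard scaled dot-product cross-attention with lip-frame features as queries and phoneme embeddings as keys; the text above does not specify the Synchronizer's attention mechanism, so this is an illustration and not the authors' implementation. The function names, feature dimension, sequence lengths, and random features are all hypothetical.

```python
import math
import random


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention_map(queries, keys):
    """Scaled dot-product attention weights, shape (len(queries), len(keys)).

    Assumption: queries are lip-frame features and keys are phoneme
    embeddings of the same dimension. Each row is a distribution over
    phonemes for one video frame.
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    attn = []
    for q in queries:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        attn.append(softmax(scores))
    return attn


def random_features(count, dim, rng):
    """Hypothetical stand-in for learned features (Gaussian noise)."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(count)]


rng = random.Random(0)
lip_frames = random_features(12, 8, rng)  # hypothetical: 12 frames, dim 8
phonemes = random_features(5, 8, rng)     # hypothetical: 5 phonemes, dim 8

attn = cross_attention_map(lip_frames, phonemes)

# Index of the most strongly attended phoneme for each lip frame.
aligned = [max(range(len(row)), key=row.__getitem__) for row in attn]
```

Plotting `attn` as a heatmap (frames on one axis, phonemes on the other) gives the kind of panel described above. With trained features, a well-aligned model would be expected to show a roughly monotonic, near-diagonal band; the random features used here will not.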